Finally, some companies are exploring edge computing technologies specifically designed for automated AI-based analysis of large sets of financial data (e.g., millions or billions of rows). For example, a company might use deep learning to develop models that identify specific risk factors associated with future revenue growth or creditworthiness of customers.ģ. Another trend is using deep learning techniques to create more sophisticated models that can handle complex business scenarios better than traditional artificial neural networks. This can help automate tasks such as detecting fraudulent activities or forecasting financial trends.Ģ.

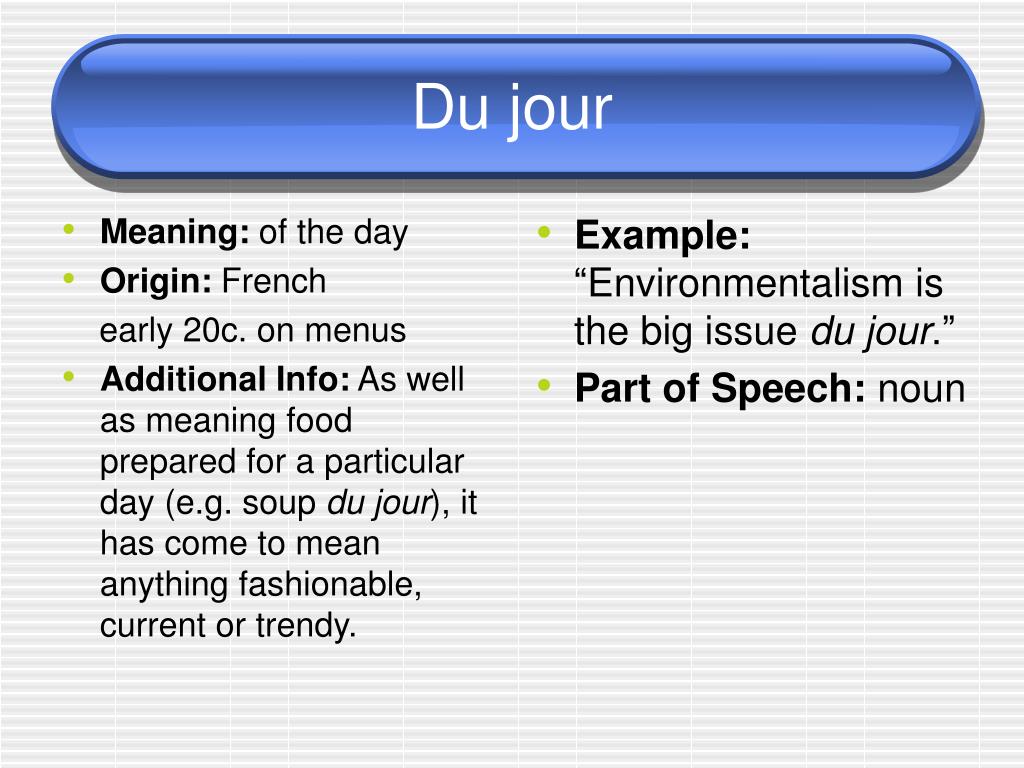

One popular trend in artificial neural networks for accounting and finance companies is incorporating machine learning algorithms to automatically learn from data, optimize performance, and improve accuracy. Trends in Artificial Neural Networks for Accounting & Finance Companiesġ. It is time AI stopped being modern Alchemy and took concrete steps toward laying the foundation of a science of intelligence.Q. 60 years later the term has become a bucket into which people throw anything they like to get it to agree upon terms and goals and at set out guidelines and guard rails. How about this? The term Artificial Intelligence was coined at the Dartmouth Conference in 1956 and defined its initial scope and direction. I agree that they are dangerous today but my personal take is that getting the government to stick its oar in the water at this time is the last thing the industry needs, it will get it wrong like it gets everything wrong and just muddy the waters more. They are not “giant minds,” by the way, just giant collections of organized data that can be queried for statistical pattern matches. But it will soon run out of steam on its own. I don’t think anyone is going to be successful stopping the language model juggernaut for a while, not even Elon Musk. But this time a technology that can deliver the reality of machine comprehension is already here: New Sapience and Synthetic Intelligence. When the current bubble deflates will we see a new AI Winter? Chatbots provide the illusion of machine comprehension of language and the world, and illusions soon wear thin. Belief in AI plummeted and there ensued a decade now known as the AI Winter. People looked around to see if some new AI technology was on the horizon that would deliver on the promises after all, but there was nothing. (Early investors who pump up bubbles always do well). It didn’t happen and the last investors in lost a lot of money. 
Then as now the market was told AI was on the verge of changing the way we live and our world would never be the same. Its first wave, Symbolic AI, also gave birth to speculative investment. Speculators love frothy investments markets. A week later Microsoft’s inaugural demo was also shown to be “making stuff up” and the company lost over $20 billion in market cap. When Bard was shown to be putting out false answers in it’s very first demo, Google lost over $100 billion in market cap in one day. Google released Bard AI, its ChatGPT competitor, a week after Microsoft’s ChatGPT Bing demo. OpenAI, maker of ChatGPT, was recently valued at $29 billion as Microsoft made a massive $10 billion investment. Sorry – no AGI.Īrticle reference: #artificialintelligence #microsoft #gpt #chatgpt Matching question language patterns with answer language patterns based on statistics without comprehending either the question or the answer is an extremely narrow skill. Answering a bunch of questions gleaned from text patterns in law books doesn’t add up to the “human-level” performance needed to try a case in court. Language models have been beating humans as question-answerers since IBM’s Watson beat Ken Jennings in Jeopardy in 2011. Faux pas literally means 'false step' in French, and thats a great description of what you do when you make a faux pas. GPT-4 has been celebrated for getting high scores on a law examination.

GTP-4 has no knowledge, no ideas to communicate it is a mindless algorithm. That is, we use language to communicate ideas, our knowledge. Human-level performance in what, text generation? There is a reason it is called “text generation.” Humans don’t generate text, we speak, or we write. "Moreover, in all of these tasks, GPT-4's performance is strikingly close to human-level performance, and often vastly surpasses prior models such as ChatGPT."

How can anyone assert that a program has a mastery of language when demonstrably, it cannot comprehend a single sentence in the sense a 5-year-old human can? Slicing and dicing text from a giant dataset of text originally written by and for humans may or may not result in a useful output, but it is fundamentally different from intelligence as humans recognize it in themselves which is what AGI is supposed to be about.